Unless you’ve been offline since Wednesday, you know that Medpedia has gone into public beta. I have a concern about the reliability of their model, based on my personal experience and the self-education I’ve been doing for the past year. I want to lay out the concern, my reasons, and a proposal.

It was a year ago that I first learned of the e-patient white paper, E-Patients: How They Can Help Us Heal Healthcare (available free in PDF or wiki), which lays out the foundational thinking for what we’re now calling participatory medicine. The Wikipedia definition of the term includes this:

> Participatory medicine is a phenomenon similar to citizen/network journalism where everyone, including the professionals and their target audiences, works in partnership to produce accurate, in-depth & current information items. It is not about patients or amateurs vs. professionals. Participatory medicine is, like all contemporary knowledge-building activities, a collaborative venture. Medical knowledge is a network.

In this context, I’ll lay out my concerns.

First, I understand the need for evidence and the need to filter out flaky assertions.

Second, I’ve often said that I’m grateful for doctors – I owe them my life. There’s no way I would have dreamed up the high-dosage Interleukin-2 treatment that saved me. Plus, that treatment was wickedly toxic when it was first tried, and at this writing my hospital (Beth Israel Deaconess in Boston) hasn’t had a death in 11 years. It was superb doctors and nurses who made that happen.

However, what patients need from an online resource is reliable information on topics where they’re not experts. (The same goes for non-expert clinicians, as you’ll see below.) And my experience is that it’s an error to presume that doctors inherently have the best answer.

(Note: I did not say doctors are wrong! Read carefully. I said that presumption would be erroneous.)

For one thing, there’s the issue cited by David Servan-Schreiber, MD, in his book Anticancer: A New Way of Life. (He’s no slouch; among other things he brought Doctors Without Borders to the US.) He twice developed glioblastoma (a brain tumor) and beat it with surgery, chemo and radiation. Researching how he could reduce his odds of another recurrence, he found many papers that his colleagues hadn’t seen – and among the colleagues he found an unconscious attitude: “If it were real, we’d have heard about it.”

This view is understandable, but there are two massive problems:

- There’s way too much information coming out for anyone to keep up. Donald Lindberg, director of the National Library of Medicine, explains: “If I read and memorized two medical journal articles every night, by the end of a year I’d be 400 years behind.” (White paper, chapter 2, conclusion #6.)

- “The lethal lag time” (Chapter 5): Even after research has produced a firm new result, there’s a delay (“latency,” in Internet engineering terms) before the information reaches physicians’ hands in the real world. How long a delay? Two, three, up to five years. People die during that lag time – people whose lives might depend on that latent information.

And the thing is, while doctors face incessant pressure on their time, patients (and their supporters) with a rare disease have all the time in the world to go deep in their search for the latest information.

Now, you (personally) might be considering these problems in the abstract – “How often does that happen? And besides, yeah, medicine is hard – people do die.” But if there’s one universal I’ve learned in my year of research, it’s this: people get radicalized when it gets personal. When it’s your life, your child, your mother, and they’re in peril, it matters whether the info you’re reading really is current, up-to-date, the best possible.

I’ll use my own condition (stage IV, grade 4 renal cell carcinoma) as an example. For this disease, Interleukin is the only treatment that produces anything like a cure; any other treatment merely slows down its progression. Yet on my ACOR.org kidney cancer listserv we frequently encounter a new member whose local oncologist didn’t tell him/her that Interleukin even exists as a treatment, or might have mentioned it but discouraged it.

Why?

- Many patients say their doc gave them info that was years out of date. See, Interleukin doesn’t work for most patients; when I first researched it, different sites said the response rate was 7% or 13%. But my ACOR group told me it’s up to 20% – which my hospital confirmed. Today it’s up to 25% there – but patients whose lives are on the line are still being told it has too little chance of success to be worth the risk.

- Regarding the risk, those doctors often cite the treatment’s toxicity. In my Web research I’d read that the side effects are “often severe and rarely fatal.” What I did NOT read, anywhere, is that a specialist hospital doesn’t have deaths anymore. (Mine hasn’t had a death in 11 years.) Those hospitals have learned how to handle the toxicity; what a patient needs to hear is “Get yourself to a specialist hospital.”

- But try finding that statement on any medical web site. And if you do, see if it’s expressed as directly as patients say it to each other.

- Worse, we keep hearing that patients at one major cancer center are routinely not even told Interleukin exists. Some people speculate it’s a competitive thing, because that center doesn’t offer Interleukin and another center across town does. How’s that for instilling trust in doctors as the authoritative source? (But regardless of the reason, we keep hearing it happens.)

- To return to the “when it gets personal” issue: if your mother’s life were at stake with this diagnosis, how would you feel if that option were not offered to you – for any reason? Wouldn’t you want to know every available option? I sure did.

The e-patient white paper has numerous other examples of cases where experts said one thing and patients took matters into their own hands and, through one method or another, developed solutions that beat what the experts said was possible.

My question is, what would Medpedia’s editors have done in each of those situations? And what would have been the outcome in any of those cases if patients had submitted edits reflecting what they’d learned, but the approved editors didn’t agree with the patient’s view?

Said differently, who will vet the vetters?

I have a suggestion, which arose Friday night in a Twitter discussion with a clinician from another country. (Ain’t social media great??) Among other things, she cited the good that Medpedia could do for doctors in remote areas, or GPs researching diseases they don’t often see. I love that! Especially for diseases like mine, where the most current life-saving info is changing rapidly.

So I propose:

- Patients and clinicians should be able to write comments on Medpedia articles, just as they can review books on Amazon or write letters to the editor of a medical journal. This would leverage the power of Web 2.0, just as it does on Amazon and countless other communities. And just as with blog comments, motivated participants could post as much new information as they want.

- Patients should be able to mark an article as helpful or not, as on Amazon. This would give the editors feedback on whether their lay readers found the resulting article useful.

- Clinicians should be able to do the same, assessing the piece’s usefulness from a clinician’s perspective.

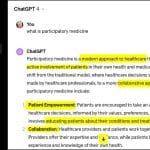

Articles would thus have publicly visible feedback to the editors, from both patients and clinicians. (See Amazon readers’ feedback on reviews of Servan-Schreiber’s book.) Both groups, clinicians and patients, would be able to add content of their own; and people in need could see which pieces others had found useful:

As shown in this example, reviewers (and probably clinicians and editors) would eventually gain their own reputations in the community as highly useful people. (This example happened to contain an Amazon “Top 100 Reviewer.”) Many Web 2.0 observers have noted that reputation has become a key factor in the success of sites like Amazon and eBay.

What do you think? Could an approach like this address the concerns I expressed above?

Some people have said it’s simply not viable for approvals to be limited to doctors, and others have conversely said it’s unrealistic to think patient feedback could be authoritative. Isn’t there room for participation, for mutual contribution?

Whatever approach you want, who will vet the vetters?

Interesting that this post is now drawing pingbacks from elsewhere.

The link in the previous comment, from Health Content Advisors, has an added note: “My further comment: the patient-created content also needs to be ‘peer-reviewed,’ organized and aggregated in a way that leads to useful analysis by professionals and patients/consumers.”

I, for one, certainly agree. One of the key “Seven Preliminary Conclusions” in the e-patient white paper is #7: the best way to improve medicine is to make it more collaborative… more participatory.

Is it definitely accurate to say that Dr. Servan-Schreiber had glioblastoma? That would give hope to so many people, but this is the only site/cite I can find that doesn’t just say “brain cancer” or “brain tumor.”

Is it acknowledged anywhere? It may be that he is intentionally vague about the type he survived in order to give hope to more people.

Hi Lisa – I don’t have the book with me here at work but I listened to the entire thing on CD twice and I’m almost certain that’s what he said. I also bought the book (can you tell I liked it?) so I’ll check it when I get home tonight.

Here are some links in the meantime; as you say, they’re not definitive.

https://www.cancercompass.com/message-board/message/single,32936,3.htm

http://www.healthyhints.org/

http://extratv.warnerbros.com/2009/01/lifechanger_–_david_serven_sc.php

If you don’t hear back by midnight, nag me again. :-)

Lisa, sorry, I forgot I loaned the book out, so I can’t look it up tonight. I’ll do what I can to get the answer over the weekend. Or perhaps you can go into a bookstore and look in the book’s index to see if the word is in it.

Hello again, and thank you for looking for this. My friend who wanted the answer so badly has the book herself and just hasn’t come across it yet. As she said, though, it doesn’t really matter: every bit of advice he gives is good for all of us, and following some of his suggestions will allow her to participate more actively in her treatment. The CD is great. I make everybody listen to it. I just have to start practicing it myself now.

Thank you so much for your time. I hope you have a good night! Lisa